How to Fix Broken Links in Umbraco

A link that does not work any more is called a broken link, dead link, or dangling link. Formally, this is a form of dangling reference: The target of the reference no longer exists. One of the most common reasons for a broken link is that the web page to which it points no longer exists. This frequently results in a 404 error, which indicates that the web server responded but the specific page could not be found. Many errors that will be face if you choose wrong hosting provider. To avoid you with difficult errors, I suggest ASPHostPortal.com as your provider. I use them until now and never errors happened with my site.

In Umbraco, SEO Checker is a great package we use with just about all our Umbraco CMS builds, it does everything you want it to do and well. Client like it too but its not a install and forget product you need to do a little love to get it right before you hand it over to the client.

Some packages you just install by defacto, they just work and make sense to have on a site. SEO Checker is one of those packages. It does need some setting up though to get it tuned to work best for your site. That inital setup is best covered in the docs but here are some of our thoughts from using it in the field to improve SEO and the over all health of our clients sites.

We’ve recently installed it on two exisiting sites for customers. This is quite straight forward but needs a little setup and the odd template change to get title tags etc. rendering out properly.

Our aim once we hand over the site with SEO Checker installed is to have it all setup so the clients content editors can jump on it and put right anything wrong with the content. They know the content better than us and know what they want it to do, link to, etc. So we don’t clean the content but we do clean up any template quirks and try to help them see the wood for the trees by removing any red herrings so they can concentrate and get stuff done.

Once we’ve got SEO Checker installed I like to run a link checker over the whole site, I use Xenu link checker for this (odd though it is what with all the alien stuff on it) I’ve yet to find another thats as fast or easy to use even after all these years. This will populate a very important report within SEO Checker, the broken link report. This will now list every broken link.

At this point I recommend you spend a little time going through the list, you would be amazed how many of these broken links might have been there for ages yet are simple little fixes. At first glance we have 600 broken links! Turns out 200 of these were broken references to favicon and an apple icon in the main template, and easy fix and 1/3 of the list cleared already.

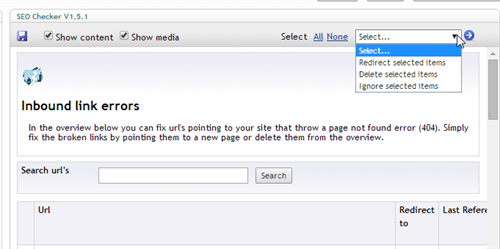

But how to remove them from the report? Luckily there is an easy search function up the top, simply add “favicon” and it lists all the pages with that url. Sadly (or luckily depending on the size of your site I guess) it pages them so you can only select 50 at a time. But from there you can tell SEO Checker to delete them or ignore them. As this was a template quirk I wanted to delete them as they should never return. One gotcha is to remember to click the round blue arrow to actually perform the action rather than the save button!

Other issues jumped out as clear template quirks, a website url that was not having “http://” pre-pended to it for instance causing “ourdomain.com/pete/ourdomain.com/pete” style links. Again an easy fix and one we needed to do as it was a template fix not a content issue and another 100 errors banished, also deleted.

While we did all this the live site we got scanned by a bot looking for ways into the backend by scanning for the usual list of Word Press admin pages. This was actually a favour, it prepopulated our report with common spam bot urls that would always cause borken links to be reported. These are legit broken links, the bot is requesting pages that don’t exist on our system (but might on other CMS sites) so they show up in the report. The good news is we can ignore these rules once and they should never show up again if we get scanned again in the future (you might want to know when you are getting scanned but it happens so often I’d suggest using another tool to monitor that rather than SEO Checker).

All the “attack” links came from the same domain, sadly there is no easy way to select all links from a referrer yet (its on Richards todo list though). So I had to get inventive and search for url patterns that allowed me to get the bulk of them removed and ignored. As a starting point you can search for these and ignore them all (ignoring removes them from the list and stops them popping up again regardless of referrer, sweet).

/template

/api

/admin

.jsp

.sql

.php

.cgi

.txt

.asp (careful, do you have a mixed asp/.net site?)

.xml (careful with this one, you might actually have some xml files you want/need, check the referrer each time)

That was another 400 links dissappeared. I’ve suggested to Richard a way of possibly added these sort of links via an import script or similar to limit the amount of “noise” these bots can cause. We know its just bots but clients might not so if we can limit it we should.

Back to the action, once I’d cleaned all the obvious errors that I could fix I ended up with 64 links that needed some attention that was out of my control ie content issues. Which is were both I and the client wanted us to get to. With that done I can point the client at the broken link report and say “crack on”.

From there they can either fix hardcoded links, select content they want urls to redirect to right within SEO Checker or just retire pages/links that are no longer needed. All in all thats helping them do their job which everyone likes.

On a side note when revisiting an older site it amazing me how differently we built things. Even sites we did a year ago are different to how we would do them now, times change. On these two legacy sites (both over 4 years old) we had very little doc type inheritence so we have custom fields for browser title, meta description, meta keywords (yes it was insisted we add this in). Some of these fields differ slightly depending on the page so they don’t all have the same alias. I thought this was going to be a pain but in SEO Checker you can actually map different fields to be your browser title, description, etc. on a per doc type basis. Now that is one clever bit of thinking. Took a little while to setup but I was surprised and pleased to see that level of config available out the box.